Lifelong Programmer Thinks about Artificial Intelligence

I started programming over half a century ago. AI is cool - but not THAT cool.

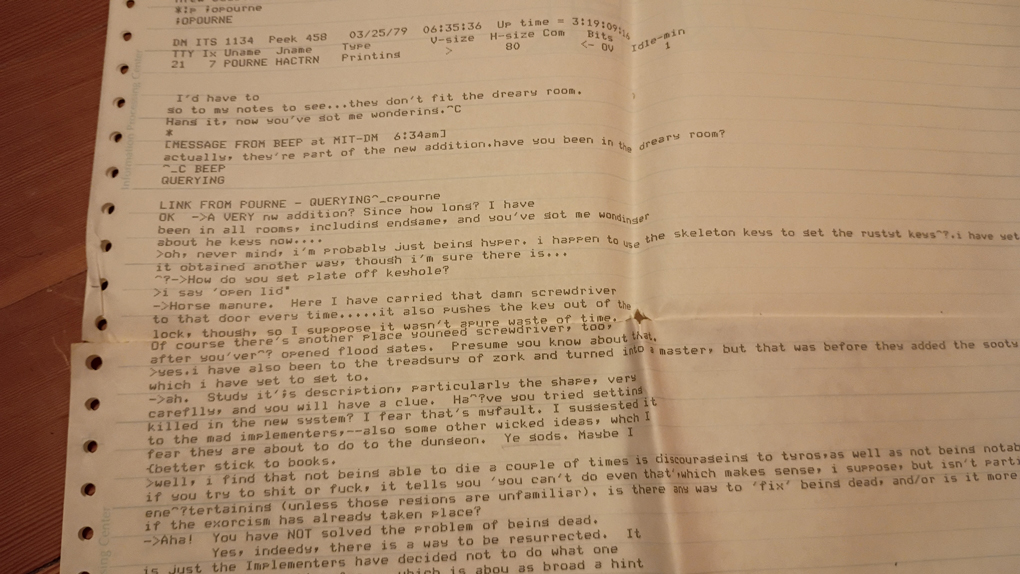

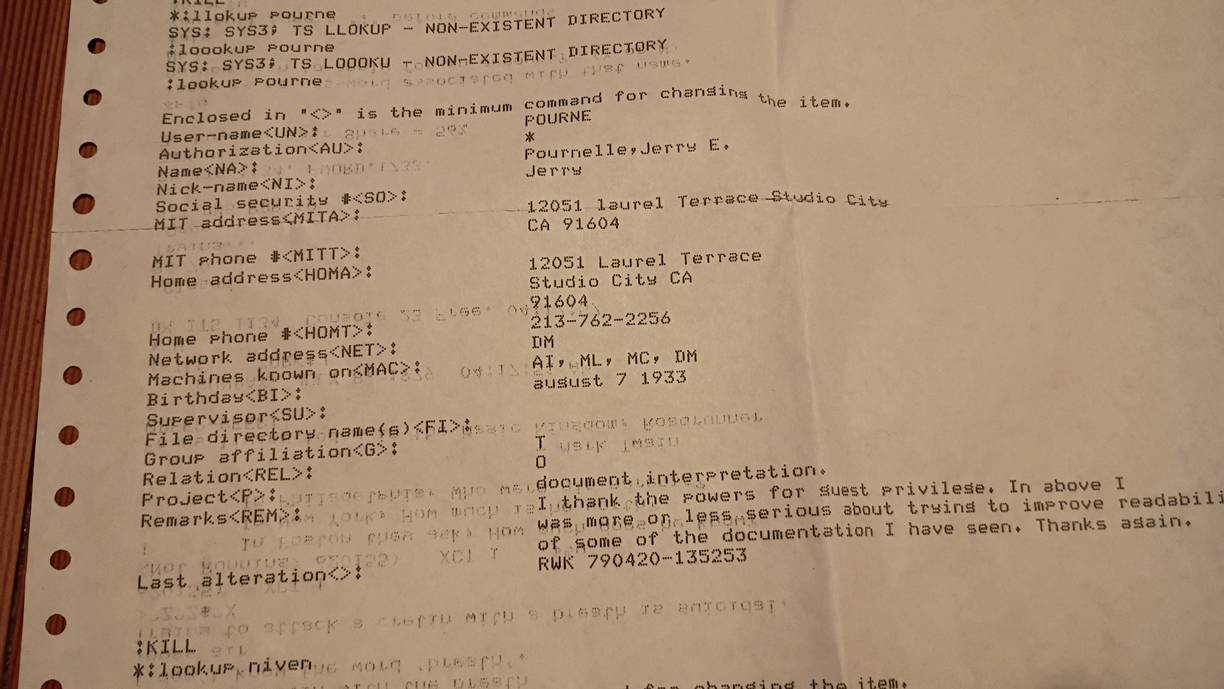

Jerry Pournelle and I chatting about Zork in 1979

[If viewing on your phone, enable Auto-rotate]

AI has been around for 70 years, through three generations. Its current pervasiveness and utility are undeniable. Its limitations and dangers, beyond the effects on jobs, are not nearly as well known. As a software engineer and lifetime programmer, I have some insights to share.

MY LIFE AS A PROGRAMMER

I started programming long ago, in 1975. I wrote a lunar lander program in BASIC on a teletype terminal (probably an ASR-33) connected to a minicomputer (a PDP-8) at Hillsboro High School. My first programming class at MIT was in FORTRAN, using punch cards. The Internet’s predecessor, ARPANET, was at MIT already. I spent too many hours playing Zork and chatting with people like science fiction legend Jerry Pournelle; I still have the green bar paper output from one of our chats in 1979. Of course, it wasn’t on a phone. To be online I had to be at the LA36 terminal in my dormitory.

In 1981, I had a job as a computer operator for the EPA, backing up PDP-11 data onto reel-to-reel tapes and swapping out Bundt-cake-sized hard disk arrays that contained… five megabytes. By 1985 I had a job as a business manager, with an i80186 [yes, 80186] PC clone that had 640K of RAM and a 30 MB hard drive and ran DOS 3.1.

In 1995 I designed and built my first website for Natural MicroSystems. I spent most of the next quarter century designing and building custom web applications for in-house use by Intel, PGE, Vestas, Nike, FEI, and Radisys. I have been a full-stack engineer, which means working on the back-end SQL database, the front-end user interface, and the middleware connecting the two.

While certainly not global in breadth, my knowledge and experience of programming is as deep as it gets. One upside is that my critical thinking and logic skills are rock-solid: computers always do exactly what you TELL them to do. Logic has not changed in the last 50 years; if-then-else reasoning is the heartbeat of all computer code.
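Here is a toy illustration of a computer doing exactly what it is told. The discount rule, the prices, and the function name `ticket_price` are all made up for this sketch; the point is only that the if-then-else follows the literal comparison, not the intent behind it.

```python
def ticket_price(age: int) -> float:
    """Return the ticket price under a hypothetical senior-discount rule."""
    full_price = 20.00
    # What the code was TOLD: discount only when age is strictly over 65.
    # What was probably meant: a discount for everyone 65 and older.
    if age > 65:
        return full_price * 0.5
    else:
        return full_price


print(ticket_price(64))  # full price, as intended
print(ticket_price(65))  # still full price: the code obeys ">" even if ">=" was meant
print(ticket_price(66))  # half price
```

Nothing here is a bug from the computer's point of view. The 65-year-old pays full price because that is what the comparison says.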

The core of database design, normalization, dates back to the early 1970s. Object-oriented design, HTML, and JavaScript have been around since the 1990s. There are constantly new layers of abstraction and new class libraries that automate previously hand-coded functionality and take advantage of growing CPU and storage capacity. They allow higher and higher levels of custom programming, although the added complexity can be a challenge to manage. The art, the hard work, is getting computers to do what you WANT them to do. Computers are relentlessly logical. One downside is that all the time I have spent on a computer has made me hypersensitive to ambiguity, even when I’m away from the computer talking to other people; I sometimes annoy them by asking too many clarifying questions to ease my anxiety.

When designing anything, we always started with problem definition, involving all stakeholders to ensure that all relevant issues were on the table to be scoped and prioritized in time and money. We documented what we did, rigorously tested it, and rolled it out.

I got an AOL account in 1994. While I didn’t get a cell phone until 2008, a BlackBerry, I have had an Android phone for the last several years, currently a V16, 5G phone that I got last year. It has an incredible, Star Trek-like range of features in its hardware and software: 64 GB storage, phone, camera, calculator, radio receiver, maps & GPS, clock, translator, web browser, tuner & metronome, and myriad other applications to manage my banking, Meetup hikes, and Trail Blazers season tickets. But by default it updates all the apps in the background, unannounced, with minimal documentation, if any, about new features. As a result, an app I’ve been using for months or years will often rearrange its features, or delete them. I have to spend time, often at inopportune moments, figuring out how to do something that I already knew how to do in the app. I’m having to run faster and faster to stay in place. If I turn off the automatic updates, I get annoying pop-ups urging me to upgrade until the old version is no longer supported and stops working altogether. While a long-term problem, the upgrade cycle is the software version of pebbles in your shoes – annoying, but rarely causing serious harm. It does take up mindshare, though, that used to be spent elsewhere in real life.

ARTIFICIAL INTELLIGENCE

The field of Artificial Intelligence (AI) research was founded at a workshop at Dartmouth College in 1956. MIT’s AI Lab was founded in 1959. AI’s first season ended in the early 1970s, when its ambitions proved to be beyond what computers could do at the time. In the early 1980s, it came back as expert systems, with a lot of programmed decision trees, but maxed out and faded again by 1990. AI 3.0 took off around 2012 with deep learning, and with it came renewed talk of artificial general intelligence (AGI). Since late 2022, large language models have become very popular and pervasive. They’re so pervasive that they are often activated by default, and one has to opt out – if that’s even possible.

While helpful in many circumstances, they can be gamed. As James Talarico, a US Senate candidate from Texas, says on his website, “Social media was created to bring us together and keep us informed, but it has evolved into something else. Predatory algorithms elevate the most extreme voices on all sides to provoke our outrage, get more clicks, and make more money. It’s the rage economy. The billionaires who own these platforms are engineering our emotions so they can profit off our pain. We have to do something about it.”

Per electoral-vote.com, I’d like us to “advocate and pass laws assigning legal liability when AI gets something wrong. For example, if a patient is ‘examined’ by an AI doctor at a hospital and the AI doctor makes a mistake and the patient is injured or dies, the law could make it clear that malpractice laws and civil lawsuits most definitely apply to AI doctors, too. In fact, the law could state that any time any AI bot causes injuries to anyone, the organization using the bots has the full legal liability that a human would have under the same circumstances and the organization using the AI can’t pass the blame off to the makers of the AI software. This liability will slow the adoption of AI bots as managers will worry about getting sued if the bots make mistakes.”

Deeper still, AI does analysis, not synthesis. It can connect pre-existing dots. It can interpolate. It can extrapolate. But it can also be gamed. It absolutely cannot anticipate the nonlinearities of life – the effects of running out of air, water, or food; the effects of a system collapsing; the effects of melting an ice sheet, or provoking someone into losing their temper. Most of the time an AI system can handle what a person can handle cognitively. But when it fails, it fails more spectacularly than humans, because humans can bend, can look around the corner, can synthesize new information. AI can only analyze the existing information. Ultimately humans are essential to backstop AI, no matter how sophisticated the code is, because other humans wrote the code, and can’t anticipate everything.
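A toy sketch of interpolation versus extrapolation across a nonlinearity follows. Everything in it is an assumption made for illustration: the function `true_ice_volume` and its numbers are invented, and a straight-line fit stands in for any model that learns only from existing data. It is not a description of how any real AI system works.

```python
def true_ice_volume(temp_c):
    """Made-up 'real world': slow loss below 0 C, rapid collapse above it."""
    if temp_c <= 0:
        return 100.0 - 0.5 * (temp_c + 10)
    return max(0.0, 95.0 - 30.0 * temp_c)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# The model only ever sees temperatures from -10 C to 0 C.
train_temps = list(range(-10, 1))
train_volumes = [true_ice_volume(t) for t in train_temps]
slope, intercept = fit_line(train_temps, train_volumes)


def predict(temp_c):
    """Predict ice volume from the fitted line."""
    return slope * temp_c + intercept


# Compare prediction and made-up truth inside and outside the training range.
for temp in (-4.5, 3):
    print(temp, round(predict(temp), 2), round(true_ice_volume(temp), 2))
```

Inside the range it was trained on, the fitted line matches the made-up truth. At 3 degrees, past the melting threshold it never saw, the line still predicts an ice sheet that is almost entirely intact, while the invented "real world" has nearly none left.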